🔮 Genome architects; Chinese cables; sun & the data centre; rising rivals; hair juche & Schrodinger’s AI cat ++ #480

Hi all, welcome to the last Sunday edition of this month.

I spent the week in NYC, where I had a number of meetings and speaking engagements. On the back of last week’s Sunday edition (which got picked up by Elon on X…) about the collapse of traditional news outlets, I had a number of conversations with some of the leading figures of New York & British media on AI and the changes to that industry. It’s got me thinking, which will get me writing on this soon enough.

This week we also saw Estonia’s prime minister Kaja Kallas be selected for one of the top jobs in the EU. Her worldview is very much shaped by the Soviet occupation of Estonia, the struggles to win back independence and build a resilient modern society. To understand where the EU’s new foreign policy chief comes from and how she thinks about the future, see my conversation with Kallas from last year:

Sunday chart: GPTs are GPTs?

One chart to end your week

Back in March last year, we highlighted a preprint paper that suggested that GPTs are general-purpose technologies. Well, that paper has now been officially published. (From here on, I'll refer to Generative Pretrained Transformers as LLMs and General Purpose Technologies as GPTs to avoid any mental gymnastics.)

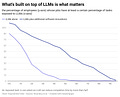

GPTs are transformative innovations that significantly impact the entire economy, much like steam power, electricity, and computers before them. They're characterised by a pervasive impact, continuous improvement, and the ability to spawn complementary innovations. The study finds that LLMs fit this bill: when paired with current and future complementary software, they could significantly impact over half the tasks in 46% of jobs. Without these complementary technologies, only about 1.8% of jobs would be similarly affected. This huge difference highlights the GPT nature of LLMs, as their impact grows dramatically when paired with other innovations. Of course, this is still an estimate of what they could achieve; the real impact could turn out greater or smaller.

But the potential for it to be greater does seem to be there. Big Tech companies remain extremely bullish on the scaling game - what do GPT-5, 6, and 7 hold? This rapid advancement raises questions about the economic impact. Anthropic CEO Dario Amodei suggests we must completely rethink our economic organisation, arguing that even universal basic income may not suffice. However, let's not get ahead of ourselves. We're still far from a future of techno-feudalism or fully-automated luxury communism. No dramatic system change will happen overnight - there will be incremental steps, as technology isn’t separate to society, but is an integral part of it. In fact, we should reframe our thinking about UBI and similar social support measures.[1] Rather than viewing them as post-hoc solutions to technology-induced problems, we should see them as catalysts for technology adoption. By providing a safety net, we can encourage people to embrace new technologies without fear of economic insecurity. For LLMs to fulfil their GPT potential, we must inspire people to embrace them, not fear them.

Spotlight

Ideas we’re paying attention to this week

Data gloom or solar boom? Bloomberg reports that AI data centres could use 8% of US power by 2030, which could mean years-long waits for grid connections and outages in dense data centre markets. It’s a pretty gloomy picture. On the other hand, the New Yorker shows that California, the world's fifth-largest economy, often generates more than 100% of its electricity demand from renewables. This is a sign of things to come: big economies driven by renewables. In fact, globally, installed capacity is doubling every three years. The strain from the data centre boom will likely be temporary. The Bloomberg projection may overstate the issue by extrapolating linearly, without fully accounting for dynamic changes in energy mix, efficiency improvements, and evolving economics of both data centres and energy production. Ultimately, we will need the computation in these data centres to solve climate change. While a lot of energy will be used to generate Genmojis, it can also be used to reduce contrails and other emissions.
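To make the gap between the two views concrete, here is a rough back-of-the-envelope sketch. It assumes the three-year doubling time mentioned above simply holds, and compares it with a straight-line extrapolation that adds the same increment every three years. Both function names and the straight-line baseline are illustrative assumptions, not figures from Bloomberg or the New Yorker.

```python
# Illustrative arithmetic only: assumes the three-year doubling time quoted
# above simply continues, which is a simplification rather than a forecast.

DOUBLING_TIME_YEARS = 3.0  # from the "doubling every three years" claim


def exponential_factor(years: float) -> float:
    """Growth multiple after `years` if capacity keeps doubling."""
    return 2.0 ** (years / DOUBLING_TIME_YEARS)


def linear_factor(years: float) -> float:
    """Growth multiple if we instead add one unit of today's capacity
    (the size of the first doubling) every doubling period."""
    return 1.0 + years / DOUBLING_TIME_YEARS


if __name__ == "__main__":
    for years in (3, 6, 9, 12):
        print(
            f"{years:>2} years: exponential x{exponential_factor(years):.0f}, "
            f"linear x{linear_factor(years):.0f}"
        )
```

Under these assumptions the two paths agree after three years but diverge quickly: after twelve years the doubling path reaches 16 times today's capacity, against 5 times for the straight line.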

From word processor to genome architect. Imagine DNA as a book. Traditional gene editing is like a word processor, changing words or short phrases. Now, scientists at Arc Institute have discovered “bridge RNAs,” molecular guides that direct enzymes to cut, paste, and rearrange entire paragraphs or chapters of DNA. This breakthrough enables large-scale genomic rewrites, not just the small edits possible with CRISPR. Complementing this, Arc Institute has also developed Evo, a 7-billion-parameter AI model trained on millions of bacterial genomes. Evo is like an advanced language model for biology, capable of reading and writing in DNA, RNA, and protein “languages” simultaneously. With bridge RNAs providing precise editing tools and Evo providing the predictive and generative power, we can now edit existing genomes with unprecedented flexibility and potentially design large genomic sequences. This powerful combination could revolutionise everything from treating genetic diseases to engineering microbes for sustainable technologies, opening a new chapter in biological engineering.

Why is Jensen Huang so worried? Nvidia’s CEO, despite riding high on AI’s surge, is looking over his shoulder at potential threats to the company’s dominance. Enter Sohu, a new player in the AI chip arena causing a stir. This ASIC (Application-Specific Integrated Circuit) is designed to accelerate transformers (LLMs, essentially). Sohu’s makers reckon that their chip can run the high-end Llama 70B LLM 20 times faster than Nvidia’s poshest model, the H100. Sohu’s specialised focus underscores the competitive landscape Huang fears, where purpose-built chips could erode Nvidia’s market share in specific AI applications. This development also ties back to our earlier discussion of AI’s growing energy demands. Sohu’s chips are claimed to be 15x more energy efficient, potentially reducing data centres' future energy consumption.

Cable wars. China is rapidly building its own undersea cable network, defying US sanctions and reshaping the global internet infrastructure. This “digital Silk Road” is creating a “one world, two systems” reality in global communications, challenging America's long-standing dominance in this critical sector.

See also: OpenAI has said it would block Chinese users’ access to its models. They already weren’t officially available there, but now they seem to be taking additional steps to block access through VPNs.

Data

Spending on Delta Air Lines credit cards approaches 1% of US GDP.

Illegal migrants in Texas have a homicide rate 26% lower than native-born Americans, while legal immigrants’ homicide rate is 61% lower.

Support for Labour and the Tories is at its lowest since the two-party system emerged in the UK a century ago.

The share of US households volunteering for charity has declined from 34% in 2005 to 26% in 2021.

The rise of software is responsible for two-thirds of the decline in labour share in Korea from 1990 to 2018.

It is estimated that 10% of all research abstracts in 2024 will be written by ChatGPT.

As of March, OpenAI was reportedly generating approximately $1 billion in annualised revenue from selling model access to app developers like Salesforce, Klarna, and Jane Street, reaching this milestone before Microsoft’s competing Azure OpenAI Service.

Short morsels to appear smart at dinner parties

💇🏻 Trade in human hair is a lucrative moneymaker for North Korea.

🔮 What will the climate be like when your child is an adult? This personalised timeline puts things in perspective.

🐸 Little saunas can help save endangered frogs from a devastating fungus.

🛰️ NASA selects SpaceX to destroy the International Space Station in the 2030s.

👹 A curious look at how the 18th-century French media created a werewolf panic.

👀 Researchers create a (creepy-looking) movable robot face with living human skin cells.

End note

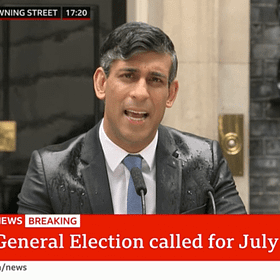

Next week is a big one for all of us in the UK: a General Election that will likely be record-breaking and perhaps lead to a political realignment. The energy levels will likely be off the charts.

I’ve met the top team of the Labour Party a number of times in the past couple of years, and I’ve been impressed with what I’ve seen: the competence, character, and public spirit matching some well-thought-out and appropriate policies for the next several exponential years. The likely Labour government will bring some much-needed stability and future orientation lacking in the past decade or so.

On the day of the election (Thursday, July 4), there will be an open-channel discussion thread with all EV readers from Thursday into Friday.

❤️ Hit the like button on top or bottom of this email to let me know if you’d like to join the chat!

In preparation for the election, I suggest reading my recommendations for the next government:

🇬🇧 Seven exponential policies for a new government

Cheers,

Azeem

What you’re up to — community updates

Laure Claire and Benoit Reillier are running the Platform Leaders event on July 11 on Generative AI. You can register for free.

Nick Clegg has written an op-ed reflecting on the state of innovation in Europe.

Share your updates with EV readers by telling us what you’re up to here.

[1] I’ll send an essay on this topic to Premium members in a couple of weeks.

I'd love to join the 4 July chat.

--

"For LLMs to fulfil their GPT potential, we must inspire people to embrace them, not fear them."

Will recent SCOTUS rulings make this harder by putting too much power in the judiciary or put pressure on Congress and the executive branch to step up?

Could you help clarify a point: What does 'significantly impact' mean in the sentence above on GPTs are GPTs: "... they could significantly impact over half the tasks in 46% of jobs." Does it mean 'reduce completion time by more than a half'? (i.e. is the definition of 'significantly impact' the same as 'exposure' per the chart?)