🔮 AI’s ongoing mismeasure; standing between superpowers; Mrs Genghis Khan, Ozempic & happy Finns ++ #470

Hi, I’m Azeem Azhar. In this week’s edition, we explore the problem of mismeasuring AI and what to do about it.

And in the rest of today’s issue:

Need to know: The AI soft power

Microsoft’s sizeable investment in Abu Dhabi’s G42 group tells us much about how the US is navigating the politics of AI.

Today in data: High voltage

EVs have driven a $2.4 billion reduction in electricity rates for all consumers in the US in the 11 years to 2021.

Opinion: Will AI kill us all?

I highly doubt it, but this week’s podcast guest thinks we’re doomed. I invited Connor Leahy to understand his perspective.

🚀 Thanks to our sponsor: Sana, your new AI assistant for work.

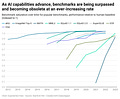

Sunday chart: Moving goalposts, unseen score

New York Times columnist Kevin Roose makes an interesting case that we don’t actually know how smart AI is because AI developers aren’t required to submit their products for testing before release. They simply pick and choose which information they make public.

We analysed the issue of measuring AI back in March 2023, when my colleague wrote:

“Existing benchmarks and evaluation techniques for AI contain numerous flaws that have been exacerbated with the rise of LLMs [...] Large language models are rapidly advancing in proficiency across various tasks, leading to the accelerated achievement of near-peak performance (often around 90%) on established benchmarks. The short lives of these benchmarks make it difficult for us to know exactly where we stand in the field of AI. It’s hard to know exactly where we are heading when the goalposts keep shifting.”

There are many factors at play. Most importantly, we don’t have a strong definition of intelligence, let alone a precise objective function for it. Even if we did, we lack the tools to measure how well different AI models perform: benchmarks become obsolete very quickly and don’t properly capture AI capabilities anyway, and there’s no outside authority to systematically test all of these models. Our poor understanding of AI is a problem, particularly for governance. We need to find ways of making AI’s capabilities and failings legible and accountable.

That’s not to say no one has tried. Governments have attempted to make AI capabilities legible by setting compute thresholds. For example, the October 2023 US Executive Order requires disclosures for models trained on more than 10^26 FLOP¹. The threshold acts as a proxy for a model’s potential to do harm. However, as Dean Ball points out, compute limits are not particularly future-proof. Newer LLMs can already match GPT-4’s performance with an order of magnitude less compute, and new architectures could deliver very powerful capabilities even more parsimoniously.
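To make the threshold concrete, here is a minimal sketch, assuming the widely used rule of thumb that training a dense transformer costs roughly 6 FLOP per parameter per training token (C ≈ 6·N·D). That approximation is our assumption, not something the Executive Order specifies, and the model sizes below are hypothetical, chosen only for illustration. It shows why a raw compute cut-off is a blunt proxy: a capable model trained with an order of magnitude less compute simply sits below the line.

```python
# Assumption (not from the Executive Order): training compute C ≈ 6 * N * D FLOP,
# where N is the parameter count and D the number of training tokens.
# This is a common back-of-the-envelope estimate for dense transformers.

EO_THRESHOLD_FLOP = 1e26  # reporting threshold for training compute cited in the US Executive Order


def training_flop(params: float, tokens: float) -> float:
    """Approximate training compute: about 6 FLOP per parameter per training token."""
    return 6.0 * params * tokens


def must_report(params: float, tokens: float, threshold: float = EO_THRESHOLD_FLOP) -> bool:
    """True if the estimated training compute is greater than the threshold."""
    return training_flop(params, tokens) > threshold


if __name__ == "__main__":
    # Hypothetical models, for illustration only.
    examples = {
        "70B params, 15T tokens": (70e9, 15e12),
        "1T params, 20T tokens": (1e12, 20e12),
    }
    for name, (n, d) in examples.items():
        c = training_flop(n, d)
        print(f"{name}: {c:.1e} FLOP, above threshold: {must_report(n, d)}")
```

Under this approximation, the 70-billion-parameter example lands at about 6.3 × 10^24 FLOP, well under the 10^26 line, while the hypothetical trillion-parameter model crosses it at about 1.2 × 10^26 FLOP.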

Words matter, too, in how AI is made legible. Matthijs Maas argues that the metaphors we choose for AI carry “regulatory narratives”. Framing AI as a “field of science”, for example, emphasises the need for transparency, knowledge-sharing and scientific rigour, while framing it as an “IT technology” implies business as usual and conventional IT-sector regulation.

So where do we go from here? First, we need a standardised method of measuring AI that accurately represents model capability. This wouldn’t be a quantification of intelligence (or a single objective function) but rather a set of capabilities we can measure. Second, we need a thoughtful approach to the vocabulary we use to describe these systems, and to the politics those words carry.

🚀 Today’s edition is supported by Sana.

Sana AI is a knowledge assistant that helps you work faster and smarter.

You can use it for everything from analysing documents and drafting reports to finding information and automating repetitive tasks.

Integrated with your apps, capable of understanding meetings and completing tasks in other tools, Sana AI won’t just change the way you access knowledge. It’ll change the way you work.

Key reads

Plane truth. Fatal crashes, doors falling off mid-flight… In its drive for efficiency and a higher share price at all costs, Boeing neglected its core mission: safety. Losing sight of its social purpose, it cut corners by outsourcing work and by laying off or sidelining “expensive” experts whose tacit knowledge and culture were what made it the world’s largest aircraft manufacturer in the first place. Eventually, the firm found itself assuming “many of the roles traditionally played by its primary regulator, an arrangement that was ethically absurd”. The tech sector should take note. If firms are to self-regulate, they will need robust internal processes, and some things may still require an external regulator. A culture of safety resides not in contractual checklists but in behaviours, relationships and values, things that may not be easily (or ever) codified. (As an aside, I don’t fly on the 737 MAX when travelling in the US or Asia.)

Middlemen. Microsoft has made yet another sizeable investment, this time $1.5 billion into Abu Dhabi’s G42, with Microsoft president Brad Smith taking a seat on G42’s board. Arranged by the US Department of Commerce, the deal is a win for the Biden administration, too: G42 has divested from ByteDance and severed links to Chinese hardware suppliers. These middle powers could become domiciles for AI “training runs”, with models trained in countries like the UAE or Saudi Arabia, where energy is cheap and there are “less stringent privacy protections”. Japan is also a major player in this rivalry. It is home to Ajinomoto, a company originally known for creating MSG, which now dominates the global supply of a critical semiconductor component called ABF. Both the US and China are desperate to reduce their reliance on the company for their own chip industries. The US has the advantage of a solid relationship with Japan, cemented by their common interest in limiting China’s technological prowess. However, as we showed in EV468, China is sidestepping obstacles such as US-imposed restrictions, for example by producing an AI processor that is cheaper than GPUs. If you want to understand geopolitics, follow the microchips.

Notes from the future. What lessons might you learn after churning through half a billion tokens? Ken Kantzer shared what he learned after integrating several LLM-heavy features into his B2B platform. One broad lesson, which we also examined in our Promptpack on “conversation engineering”, is that less is more when it comes to prompting. Scaling laws mean that the more data a model is trained on, the better it tends to perform. When using an LLM, however, limiting how much context you give it keeps it focused and yields better results. The second useful lesson is that most applications don’t need fancy frameworks like LangChain: a model provider’s plain chat API (such as OpenAI’s) is sufficient for most use cases. An experimental approach is key when bringing AI tools into an organisation. Introduce tools gradually, check that they work, keep the models focused by not overwhelming them with information, and figure out what works for you.
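As a rough illustration of both lessons, here is a minimal sketch, assuming a crude keyword-overlap score as the relevance measure. It keeps only the few most relevant snippets and builds a plain role/content `messages` list, the shape OpenAI’s Chat Completions API accepts, with no framework in between. The helper names, snippets and scoring rule are hypothetical; this is not Kantzer’s own code.

```python
from typing import Dict, List, Set


def _words(text: str) -> Set[str]:
    """Lower-case the text and strip basic punctuation from each word."""
    return {w.lower().strip("?.,!") for w in text.split()}


def score(snippet: str, question: str) -> int:
    """Hypothetical relevance score: number of distinct question words found in the snippet."""
    return len(_words(snippet) & _words(question))


def build_messages(question: str, snippets: List[str], k: int = 2) -> List[Dict[str, str]]:
    """Keep only the k highest-scoring snippets ("less is more") and wrap them in chat messages."""
    top = sorted(snippets, key=lambda s: score(s, question), reverse=True)[:k]
    context = "\n".join(f"- {s}" for s in top)
    return [
        {"role": "system", "content": "Answer using only the context provided. Be concise."},
        {"role": "user", "content": f"Context:\n{context}\n\nQuestion: {question}"},
    ]


if __name__ == "__main__":
    # Hypothetical knowledge-base snippets; only the relevant ones should reach the model.
    snippets = [
        "Refunds are processed within 5 days.",
        "Our office is in London.",
        "Refunds need an order number.",
        "The cafeteria opens at 8am.",
    ]
    messages = build_messages("How are refunds processed?", snippets)
    print(messages[1]["content"])
```

The resulting list would be sent as the `messages` of a single chat request. Trimming to `k` snippets is the “less is more” lesson in code; in practice you would tune `k`, and the scoring method, by experiment.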

Newsreel

China orders Apple to remove WhatsApp, Signal and Telegram from the App Store.

Google is set to spend more than $100 billion developing AI.

Meta releases Meta Llama 3, its new generation of open-source LLMs.

Microsoft introduces VASA-1, a model capable of generating realistic videos of humans speaking from a single image and audio recording.

Netflix’s profits surge as it clamps down on password sharing.

The Future of Humanity Institute, a pioneer of research into existential risk, including from AI, has shut down.

The UAE experiences its heaviest rains in 75 years of record-keeping.

The Colorado legislature approves a bill to protect neural data. For a deep dive, see my conversation with Professor Nita Farahany.

Data

The share of US tech jobs located in California has fallen by around three percentage points since 2020, hitting its lowest level in more than a decade.

The average 25-year-old American Gen Z-er earns over 50% more than baby boomers did at the same age, with an annual household income exceeding $40,000.

Around 40% of energy companies report that they are struggling to fill open positions in key areas such as installations and manufacturing.

BYD’s average net profit per car sold is approximately $1,250, less than a sixth of Tesla’s $8,250 per vehicle.

EVs have driven a $2.4 billion reduction in electricity rates for all consumers in the US in the 11 years to 2021.

Bonney et al. found that the percentage of businesses using some sort of AI grew from 3.7% in September 2023 to 5.4% in February 2024, and is predicted to reach around 6.6% by autumn 2024.

Finland is the world’s happiest country, according to the World Happiness Report.

Short morsels to appear smart at dinner parties

👀 Scientists estimate that climate change has already committed the world economy to a 19% income reduction over the next 26 years, regardless of future emissions.

🐎 The surprising social structures that enabled the Mongols to conquer Eurasia. (Podcast)

🦿 Boston Dynamics retires its renowned hydraulic Atlas humanoid to introduce a fully electric successor.

🤷🏻 Oops… A UX design flaw on the UK’s new divorce portal is blamed for an unintended divorce.

⚡️ A deep dive into PCSEL (“pick-cell”), a tiny laser that can melt steel.

🔋 New charging algorithm extends the life of li-ion batteries.

😬 What happens when you quit Ozempic?

🙏🏽 Narratives of shared biology and shared experiences are shown to strengthen psychological bonds with humanity at large.

End note

First Instagram, now X/Twitter, though for different reasons. I spent 15 years on Twitter, using it as a primary source for my professional development and following experts in tech, SaaS, venture capital, semiconductors, EVs, batteries, regulation, genomics, synbio, science, history and much, much more. Back in 2009, my previous company built one of the first comprehensive directories of expertise among Twitter users.

Twitter has long battled with its signal-to-noise ratio. Justin Bieber was a common problem, his fans swamping timelines. Then “retweet-to-win” spam blighted us. Steps to stop bots tackled much of that. The growing culture wars and Trump’s presidential term somewhat broke the platform: the vituperative tone of American culture bled into expert commentary. But even that slowly toned down.

Many of the experts I used to track now use the platform less. I acknowledge that this might not be due to platform changes; they might just be older, busier, or have better things to do. My subjective experience of Twitter, measured by the quality of what I learn there, is poorer than it has been in years. It is not devoid of utility, but it is far worse than it was. And the noise surrounding those insights is dirtier and shillier.

I expect that trend to continue, although for now, X is just useful enough to track some scientists, analysts and experts I rate on certain subjects.

I’m on Threads, though not posting much, and, of course, LinkedIn. Neither yet offers the remarkable diversity of expertise that Twitter historically offered, but since I don’t think Twitter is likely to offer that again, they will have to do.

Have a great week!

A

P.S. If you’re in London on Wednesday, join me in conversation with the FT AI editor Madhumita Murgia at Daunt Books Marylebone at 7pm. Get your ticket here.

What you’re up to — community updates

Professor Kevin Werbach hosted me on his new podcast about AI and accountability. We discussed AI development, power dynamics, regulation and the tug-of-war between control and innovation.

Congrats to Dan Gillmor on his retirement from the Walter Cronkite School of Journalism and Mass Communication, and on embarking on the next phase of his work in journalism for democracy.

Nat Bullard announces a new venture: Halcyon, an information platform to help professionals make better decisions on energy transition.

Rodolfo Rosini gave an interview about his mission to shape the future of near-zero energy computing.

Alex Kendall and his team at Wayve released Lingo-2, a multimodal AI model that navigates roads and narrates its journey.

Chanuki Illushka Seresinhe is hiring a Founder Associate to join her AI startup.

Mark Sherwood-Edwards launched a newsletter about the intersection of AI and legal issues.

Christian Vuye launched AI product Assista on Product Hunt this week.

Share your updates with EV readers by telling us what you’re up to here.

¹ FLOP: floating-point operations. 10^26 FLOP is roughly 5x the estimated training compute used for GPT-4.
